leo | 2025-01-01 10:47:11 | 2025-02-10 10:48:51 | BOND: Outcomes in Advancing Education, Tolerance and Heritage Preservation to combat Antisemitism

SmartDataLake: Stakeholder survey and key project insights

In an era of data-driven decision-making, a key requirement is high-quality data on which the necessary insights can be based. While SmartDataLake aims to improve the speed at which deep insights can be obtained from new, large-scale datasets, the question remains how this workflow can be improved and what the specific requirements are.

The SmartDataLake consortium brings together research partners, who drive research and innovation forward, and industrial partners, who provide use-case-driven requirements and evaluate the developed solutions and components through feedback. As part of the requirements elicitation methodology, the use-case partners are involved throughout the whole project: from elaborating the use cases and deriving the requirements to executing a series of piloting activities, during which feedback is collected based on hands-on observations. While the three use-case partners represent three versatile applications of the SmartDataLake solution, external input has also been collected in order to broaden the scope and identify the needs of a wide range of relevant stakeholders.

Additional requirements were collected through a questionnaire targeting the two primary stakeholder groups of the SmartDataLake project, namely data analysts and data scientists, which gathered feedback on their daily challenges and on how frequently they perform various data-related tasks and activities. Most of the questions aimed to a) identify the challenges they face, in order to find gaps; b) categorize the tools they use, in order to align the development as closely as possible with currently applied processes; and c) collect input on the importance of the various challenges, in order to prioritize accordingly.
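To illustrate how importance ratings like those collected under c) can be turned into a priority order, here is a minimal Python sketch. It assumes each respondent rates each challenge on a hypothetical 1 to 5 scale (5 = very important) and ranks challenges by their mean rating; the challenge names and ratings are made up for illustration and are neither the actual questionnaire data nor the project's analysis method.

```python
from statistics import fmean

# Hypothetical responses: each dict maps a challenge to an importance rating
# on an assumed 1-5 scale. Illustrative only, not actual SmartDataLake data.
responses = [
    {"data cleaning": 5, "finding relevant data": 4, "visualization": 3},
    {"data cleaning": 4, "finding relevant data": 5, "visualization": 2},
    {"data cleaning": 5, "finding relevant data": 3},  # unanswered items are simply absent
]


def rank_challenges(responses: list[dict[str, int]]) -> list[tuple[str, float]]:
    """Return (challenge, mean rating) pairs, highest mean first.

    Ties are broken alphabetically so the order is deterministic.
    """
    ratings: dict[str, list[int]] = {}
    for response in responses:
        for challenge, score in response.items():
            ratings.setdefault(challenge, []).append(score)
    averages = {challenge: fmean(scores) for challenge, scores in ratings.items()}
    return sorted(averages.items(), key=lambda item: (-item[1], item[0]))


for challenge, avg in rank_challenges(responses):
    print(f"{challenge}: {avg:.2f}")
```

Averaging only over the answers actually given keeps a skipped item from dragging a challenge down; a real analysis might also weigh how many respondents rated each item.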

Below, we summarize a few key insights gained from analyzing the questionnaire responses.

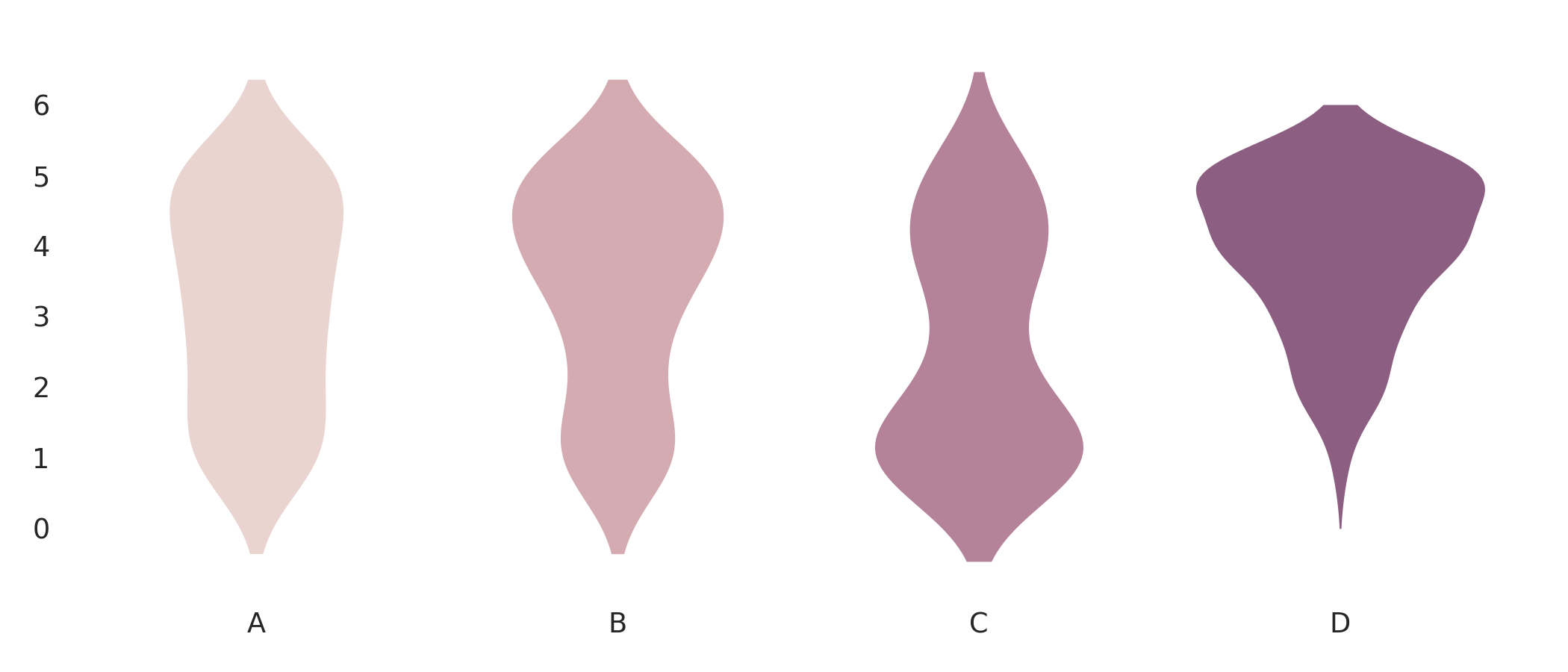

While most of the insights generally confirmed overall industry trends (e.g., Python dominates the landscape thanks to its broad range of excellent frameworks and libraries), it was encouraging to see strong use of open and web data, as illustrated in the figure below. This may indicate that past efforts to make open data available and findable are benefiting end users, which argues for continuing these efforts.

Data sources used by the participants (figure categories):

- Proprietary data created by your organization
- Proprietary data generated by your organization’s systems/products
- Proprietary data purchased from third parties
- Open/Web/Crowdsourced data
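If respondents could select more than one data source category (an assumption; the question format is not stated), per-category shares like those in the figure are best computed relative to the number of respondents rather than the number of selections. The Python sketch below uses the category labels above with made-up answers; it is not the project's actual data or analysis.

```python
from collections import Counter

# Hypothetical multi-select answers: each respondent may tick several
# categories. Illustrative only, not the actual survey results.
answers = [
    {"Proprietary data created by your organization", "Open/Web/Crowdsourced data"},
    {"Proprietary data generated by your organization's systems/products"},
    {"Open/Web/Crowdsourced data", "Proprietary data purchased from third parties"},
    {"Proprietary data created by your organization", "Open/Web/Crowdsourced data"},
]


def share_per_category(answers: list[set[str]]) -> dict[str, float]:
    """Return the fraction of respondents who selected each category,
    most frequently selected first.

    Because respondents can pick several categories, the shares may sum
    to more than 1.0.
    """
    counts = Counter(category for answer in answers for category in answer)
    return {category: count / len(answers) for category, count in counts.most_common()}


for category, share in share_per_category(answers).items():
    print(f"{share:.0%}  {category}")
```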

The answers also reaffirmed, as mentioned above, that the participants still primarily use traditional, well-established tools, e.g., a combination of Python-based tools such as Jupyter, Pandas and Matplotlib, and only less frequently their higher-level derivatives such as Seaborn and other tools built on top of them.

From a process point of view, the majority of participants responded that their data collection, processing and analysis work is mainly performed on an ad-hoc basis. While not very surprising, this highlights the need for the tools under development to be highly intuitive, so that the cost of switching between tasks is reduced. In SmartDataLake, besides documenting the various tools and components, this is addressed through strong visual support built into most of the components, which maintains a certain level of familiarity regardless of the dataset being investigated. Furthermore, partly driven by the collected feedback, the individual components are intended to work both together, as an overarching data ingestion, processing and analysis pipeline, and as self-contained units for use cases where specific functionality is needed and must be integrated into new or existing systems.
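The idea of components that work both as one pipeline and as standalone units can be sketched with plain composable functions that share a simple "data in, data out" contract. The Python sketch below is purely illustrative: the stage names (`ingest`, `clean`, `summarize`) and the `run_pipeline` helper are hypothetical and do not reflect the actual SmartDataLake components or their APIs.

```python
from functools import reduce
from typing import Any, Callable

# Hypothetical stage type: every stage takes data and returns data, so it can
# be called on its own or chained with others. Not the real SmartDataLake API.
Stage = Callable[[Any], Any]


def ingest(raw: str) -> list[str]:
    """Split raw comma-separated input into trimmed values."""
    return [value.strip() for value in raw.split(",")]


def clean(values: list[str]) -> list[float]:
    """Drop empty or non-numeric values and convert the rest to floats."""
    cleaned = []
    for value in values:
        try:
            cleaned.append(float(value))
        except ValueError:
            continue  # skip values that cannot be parsed as numbers
    return cleaned


def summarize(numbers: list[float]) -> dict[str, float]:
    """Compute simple descriptive statistics."""
    if not numbers:
        return {"count": 0, "mean": float("nan")}
    return {"count": len(numbers), "mean": sum(numbers) / len(numbers)}


def run_pipeline(data: Any, *stages: Stage) -> Any:
    """Feed the output of each stage into the next one, in order."""
    return reduce(lambda acc, stage: stage(acc), stages, data)


# Used together as an end-to-end pipeline ...
print(run_pipeline("1, 2, n/a, 3,", ingest, clean, summarize))
# ... or a single component reused on its own inside an existing workflow.
print(clean(["4.5", "oops", "5.5"]))
```

Keeping the contract between stages this simple is what lets a single component be dropped into an existing script or notebook without adopting the whole pipeline.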

Finally, when asked about concerns and barriers to integrating new systems into existing workflows, participants rated the cost of migrating to a new tool, in terms of both time and resources, among the highest. The project addresses both aspects by integrating with existing tools to a large extent (e.g., Jupyter Notebook) and by making the components as flexible as possible, while at the same time allowing them to act as efficient replacements for activities that typically require manual effort.

The questionnaire will be open throughout the full duration of the project, in order to monitor how the landscape develops over time, and can be accessed using the link below.

Links

https://www.surveyly.com/p/index.php/survey/index/sid/515498/newtest/Y/lang/en

Keywords

Big data, data lake, smart, questionnaire, requirements