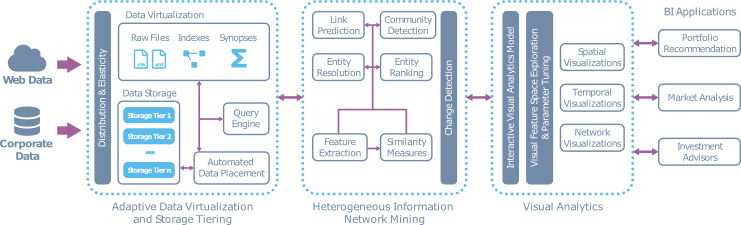

SmartDataLake: Data virtualization, heterogeneous network mining, visual analytics and how they relate to each other

The SmartDataLake project aims to solve key challenges in handling, processing and analysing large datasets. These challenges span three stages: handling and processing the data, analysing it to generate insights, and communicating the results through a visual analytics layer. Together, these stages enable the user to go rapidly from raw data to actual insights.

System Architecture

The SmartDataLake system architecture consists of three key components, each broken down into individual modules that provide dedicated functionality. Generally, each module is implemented as a microservice, so that users can mix and match functionality as and when they need it. The grouping into components primarily provides a logical breakdown of that functionality into the activities the user is expected to perform. The figure gives an overview of the components and their individual modules (or microservices, where applicable), and the key functionality is described below.

Each of the three components reflects a key step of the data processing pipeline (data ingestion and handling, data analysis, and data visualization) and improves on that activity. In addition, as illustrated on the right-hand side of the figure, the project considers three business use cases. These have been used to derive requirements for the various components and will serve as validation during the piloting part of the project, executed in its final phase. Combined into a processing pipeline, the components enable a user to perform end-to-end discovery and investigation on semi-structured, raw data and to gain early insights.
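The pipeline idea, where independent modules are chained into ingestion, analysis and visualization while any subset can still be used on its own, can be sketched as function composition. This is a minimal in-process illustration only: the real modules are separate microservices, and the stage names `ingest`, `analyse`, `visualise` and `run_pipeline` are hypothetical, not part of any SmartDataLake API.

```python
from functools import reduce
from typing import Any, Callable, Dict, List

# A stage takes the previous stage's output and returns its own output.
Stage = Callable[[Any], Any]


def run_pipeline(stages: List[Stage], data: Any) -> Any:
    """Feed the output of each stage into the next one, left to right."""
    return reduce(lambda acc, stage: stage(acc), stages, data)


# Hypothetical toy stages, one per component (not the SmartDataLake modules).
def ingest(raw_csv: str) -> List[Dict[str, str]]:
    """Data handling: parse simple comma-separated text into records."""
    header, *rows = [line.split(",") for line in raw_csv.strip().splitlines()]
    return [dict(zip(header, row)) for row in rows]


def analyse(records: List[Dict[str, str]]) -> Dict[str, int]:
    """Data analysis: count records per sector."""
    counts: Dict[str, int] = {}
    for record in records:
        counts[record["sector"]] = counts.get(record["sector"], 0) + 1
    return counts


def visualise(counts: Dict[str, int]) -> str:
    """Visualization: render the counts as a text bar chart."""
    return "\n".join(f"{key:<8}{'#' * value}" for key, value in sorted(counts.items()))


raw = "company,sector\nA,energy\nB,retail\nC,energy\n"
full_report = run_pipeline([ingest, analyse, visualise], raw)
partial = run_pipeline([ingest, analyse], raw)  # mix and match: skip visualization
print(full_report)
print(partial)  # {'energy': 2, 'retail': 1}
```

Because each stage only depends on the shape of its input, a user can drop, reorder or swap stages, which mirrors the mix-and-match intent of the microservice design.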

Adaptive data virtualization and storage tiering

The data management layer, also called SDL-Virt, focuses on three main aspects: 1) ingesting arbitrary, commonly used data formats, e.g., XML, JSON and CSV, and enabling the user to operate on them through an SQL-like language; 2) using the available hardware, CPU and GPU, efficiently to execute queries; and 3) creating query plans efficiently on the fly. In essence, this layer lets the user ingest data and work on it efficiently, through means they are already familiar with.
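To make the first aspect concrete, the sketch below exposes a CSV document and a JSON document as SQL tables and queries across both with a single statement. It is a stand-in under clear assumptions: it uses Python's built-in SQLite rather than SDL-Virt's engine, copies the data into an in-memory database instead of virtualizing it in place, and does not model CPU/GPU execution or adaptive query planning. The sample data and helper names are hypothetical.

```python
import csv
import io
import json
import sqlite3
from typing import Dict, List

# Hypothetical sample inputs in two of the formats mentioned above.
COMPANIES_CSV = "id,name,country\n1,Acme,AT\n2,Globex,DE\n"
ORDERS_JSON = (
    '[{"company_id": 1, "amount": 120.0},'
    ' {"company_id": 1, "amount": 80.0},'
    ' {"company_id": 2, "amount": 50.0}]'
)


def _create_table(conn: sqlite3.Connection, table: str, records: List[Dict]) -> None:
    """Create and fill a table from a list of dicts (names are trusted here)."""
    columns = list(records[0])
    conn.execute(f"CREATE TABLE {table} ({', '.join(columns)})")
    placeholders = ", ".join("?" for _ in columns)
    conn.executemany(
        f"INSERT INTO {table} VALUES ({placeholders})",
        [tuple(record[c] for c in columns) for record in records],
    )


def load_csv(conn: sqlite3.Connection, table: str, text: str) -> None:
    """CSV values arrive as strings."""
    _create_table(conn, table, list(csv.DictReader(io.StringIO(text))))


def load_json(conn: sqlite3.Connection, table: str, text: str) -> None:
    """A JSON array of objects keeps its numeric types."""
    _create_table(conn, table, json.loads(text))


conn = sqlite3.connect(":memory:")
load_csv(conn, "companies", COMPANIES_CSV)
load_json(conn, "orders", ORDERS_JSON)

# One SQL query across data that originated in two different formats;
# the CAST reconciles the string id from CSV with the integer id from JSON.
totals = conn.execute(
    """
    SELECT c.name, SUM(o.amount) AS total
    FROM companies AS c
    JOIN orders AS o ON o.company_id = CAST(c.id AS INTEGER)
    GROUP BY c.name
    ORDER BY total DESC
    """
).fetchall()
print(totals)  # [('Acme', 200.0), ('Globex', 50.0)]
```

The point the sketch illustrates is the user-facing one: once heterogeneous files are exposed as tables, the user works in familiar SQL regardless of the original format.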

Heterogeneous information network mining

The analysis layer, also referred to as SDL-HIN, converts the data into a heterogeneous information network, on which the user can then perform various analytical tasks, such as joining entities across datasets, ranking entities based on their connectivity, and searching for similar entities based on their attributes or the similarity of their interconnections. The main purpose of this layer is therefore to generate insights into the ingested data efficiently.
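Two of the tasks listed above, connectivity-based ranking and similarity search over a network whose nodes carry different types, can be illustrated with a small sketch. The techniques are swapped-in textbook choices, not SDL-HIN's actual algorithms: plain PageRank power iteration for ranking, and Jaccard overlap of neighbour sets for similarity. The node types, sample edges and function names are hypothetical, and entity joining across datasets is not covered.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Set, Tuple

Node = Tuple[str, str]  # (entity type, identifier), e.g. ("company", "Acme")
Graph = Dict[Node, Set[Node]]


def build_hin(edges: Iterable[Tuple[Node, Node]]) -> Graph:
    """Undirected adjacency sets over typed nodes."""
    adj: Dict[Node, Set[Node]] = defaultdict(set)
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    return dict(adj)


def pagerank(adj: Graph, damping: float = 0.85, iterations: int = 50) -> Dict[Node, float]:
    """Connectivity-based ranking via plain PageRank power iteration."""
    n = len(adj)
    rank = {v: 1.0 / n for v in adj}
    for _ in range(iterations):
        new_rank = {v: (1.0 - damping) / n for v in adj}
        for v, neighbours in adj.items():
            share = damping * rank[v] / len(neighbours)  # every node has >= 1 edge
            for u in neighbours:
                new_rank[u] += share
        rank = new_rank
    return rank


def most_similar(adj: Graph, node: Node) -> List[Tuple[float, Node]]:
    """Same-type entities ranked by Jaccard overlap of their neighbourhoods."""
    scores = []
    for other, neighbours in adj.items():
        if other == node or other[0] != node[0]:
            continue
        overlap = len(adj[node] & neighbours) / len(adj[node] | neighbours)
        scores.append((overlap, other))
    return sorted(scores, reverse=True)


edges = [
    (("company", "Acme"), ("sector", "energy")),
    (("company", "Acme"), ("person", "Ann")),
    (("company", "Globex"), ("sector", "energy")),
    (("company", "Globex"), ("person", "Ann")),
    (("company", "Initech"), ("sector", "retail")),
    (("company", "Initech"), ("person", "Ann")),
    (("company", "Initech"), ("person", "Bob")),
]
hin = build_hin(edges)
ranks = pagerank(hin)
similar = most_similar(hin, ("company", "Acme"))
print(similar)  # [(1.0, ('company', 'Globex')), (0.25, ('company', 'Initech'))]
```

Restricting similarity to nodes of the same type is one simple way to respect the heterogeneity of the network; a production system would typically use richer, type-aware path measures.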

Visual analytics

Finally, the data visualisation layer provides the user with interactive feedback and results based on their actions and parameter choices. These choices are propagated to the analytics modules, so that the user receives continuous feedback on the intuition they are applying and can explore the data they are working on.
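The feedback loop described above, where a parameter change in the front end is propagated to the analytics and the view is refreshed, resembles an observer pattern. The sketch below is a hypothetical, in-process illustration of that idea: `ParameterStore`, the `threshold` parameter and the similarity scores are assumptions made for the example, not components of the SmartDataLake visual analytics layer.

```python
from typing import Callable, Dict, List

Params = Dict[str, float]
Listener = Callable[[Params], None]


class ParameterStore:
    """Hypothetical shared parameter state between a visual front end and analytics."""

    def __init__(self, **initial: float) -> None:
        self._values: Params = dict(initial)
        self._listeners: List[Listener] = []

    def subscribe(self, listener: Listener) -> None:
        self._listeners.append(listener)
        listener(dict(self._values))  # render once with the current parameters

    def set_value(self, name: str, value: float) -> None:
        self._values[name] = value
        for listener in self._listeners:  # propagate the change to every module
            listener(dict(self._values))


# Hypothetical similarity scores produced by an analytics module.
SIMILARITY = {"Globex": 1.0, "Umbrella": 0.6, "Initech": 0.25}
rendered: List[List[str]] = []


def refresh_view(params: Params) -> None:
    """Re-run the toy analysis with the new threshold and 'draw' the result."""
    hits = sorted(name for name, score in SIMILARITY.items() if score >= params["threshold"])
    rendered.append(hits)
    print(f"threshold={params['threshold']}: {hits}")


store = ParameterStore(threshold=0.5)
store.subscribe(refresh_view)      # threshold=0.5: ['Globex', 'Umbrella']
store.set_value("threshold", 0.9)  # threshold=0.9: ['Globex']
```

In a distributed deployment the listener call would become a request to an analytics microservice, but the control flow (parameter change, recomputation, redraw) stays the same.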

Next steps

Over the course of the project, each of these components will be further developed and its performance improved. As mentioned, the components will also be evaluated and benchmarked individually during a ten-month pilot. The pilot, executed during the last phase of the project, will enable the use-case partners to apply the components to their use cases, to provide feedback on the components' functional aspects and usability (e.g., manual effort and the intuitiveness of the tools) and, to a large extent, to evaluate the components' performance when used to analyse large-scale datasets.

Further details about the system architecture and the components can be found in Deliverable D1.2: System architecture, available at the link below. Each of the three components will be documented in its respective public deliverable by the end of the project, and these deliverables will be made available on the project website.

Links

https://smartdatalake.eu/wp-content/uploads/2013/09/SmartDataLake-D1.2-System_architecture.pdf

Keywords

Big data, data lake, smart, system architecture, microservices, distributed